For decades, the enterprise has been haunted by the ghost of “legacy.” We’ve been told that the core of our businesses, the decades of records and business rules locked in COBOL files, is a liability, a frozen asset too fragile to touch and too complex to modernize. But as a digital transformation strategist, I see a different reality. This isn’t technical debt; it is the untapped IQ of your organization.

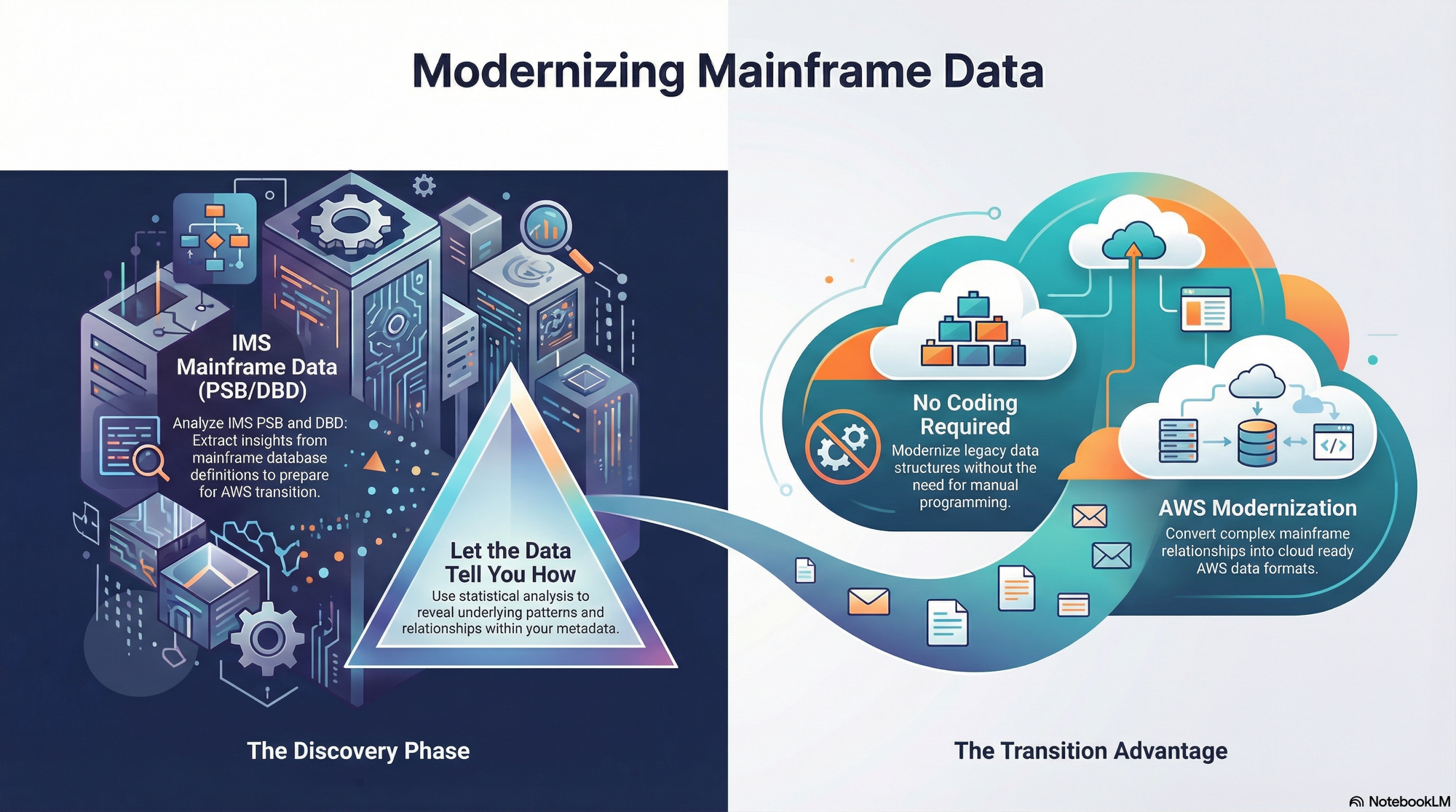

The “Legacy Logic” framework is shattering the traditional modernization roadmap. With Metadata Garage Services, the bridge between the mainframe and the frontier of AI becomes remarkably short. We are no longer talking about a multi-year migration nightmare; we are talking about a fundamental shift in mindset that turns a “static garage” of records into a high-velocity AI Intelligence Hub.

The Zero-Refactor Revolution

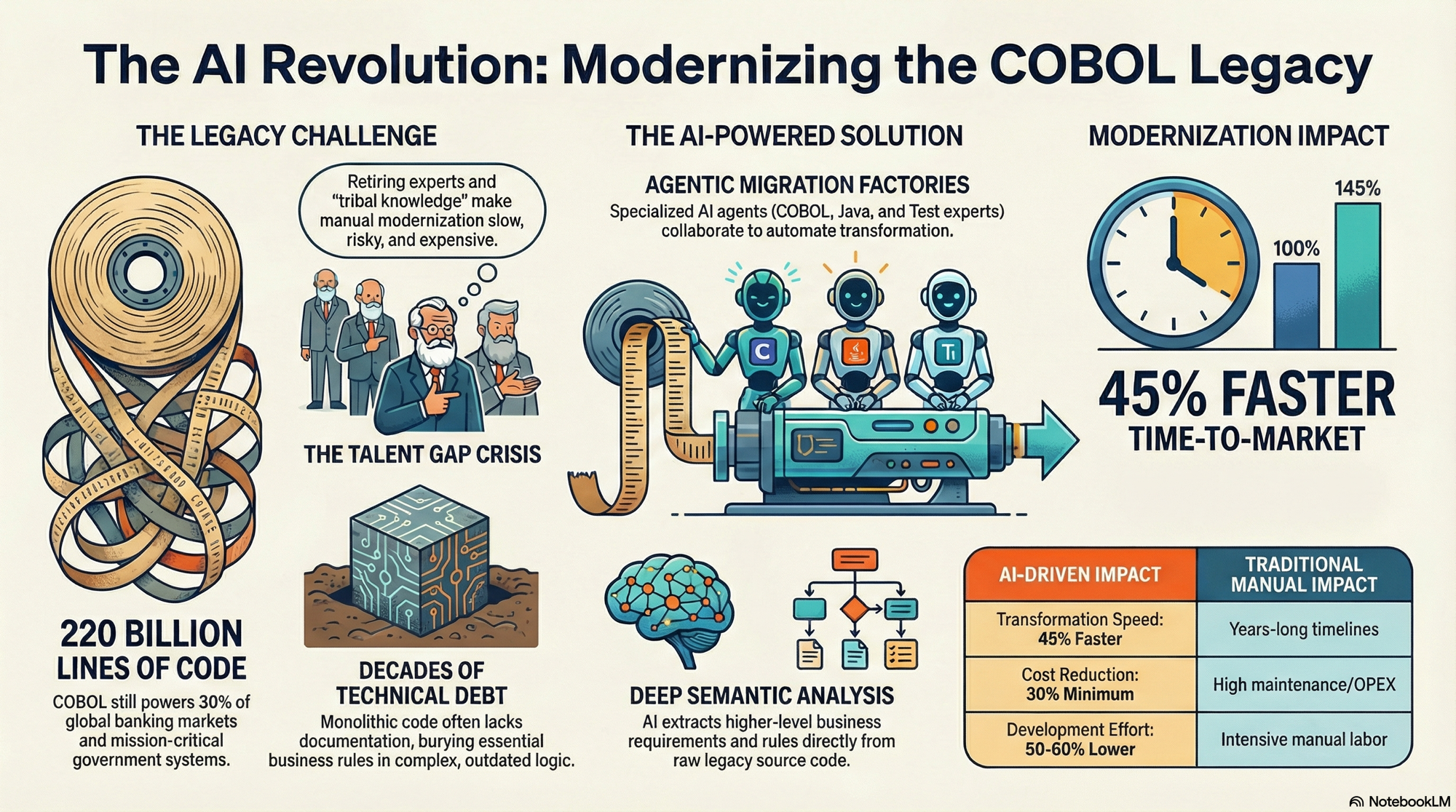

The single greatest barrier to innovation is the “Prep-Work Myth.” Conventional wisdom dictates that before AI can even glance at legacy data, you must endure years of refactoring, manual coding, and grueling data normalization. For most CIOs, touching the legacy core is a high-stakes risk that threatens the very stability of production environments.

Metadata Garage Services provides the ultimate “read-only” path to intelligence, effectively breaking the shackles of technical debt without jeopardizing the system of record. The mandate is clear: you can now move toward “AI from your COBOL files with no coding, requirements, or preparation.”

By removing the need for manual intervention or system overhauls, we shift the culture of the IT department from “maintenance and defense” to “innovation and insight.” You don’t need to rewrite your history to benefit from the future; you simply need the right interface to access it.

The Automated On-Ramp: From Blind Storage to Statistical Clarity

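Many failed digital transformations start with messy data. In the legacy world, COBOL files are often “black boxes”: fixed-width records, frequently EBCDIC-encoded with packed numeric fields, whose layout is described only in a separate copybook. Without that layout, modern tools have zero visibility into them. To an LLM (Large Language Model), an unmapped mainframe file is just noise.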
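To make the “noise” problem concrete, here is a minimal Python sketch of what it takes to read a single mainframe record. Everything in it is an assumption for illustration: the 13-byte record, the field names, and the layout are hypothetical, and this is not how Metadata Garage Services works internally. It only shows why the bytes mean nothing until a layout is applied.

```python
from decimal import Decimal


def unpack_comp3(raw: bytes, scale: int = 0) -> Decimal:
    """Decode a COBOL COMP-3 (packed decimal) field.

    Each byte holds two decimal digits; the low nibble of the last
    byte is the sign (0xD means negative).
    """
    digits = []
    for byte in raw[:-1]:
        digits.append(byte >> 4)
        digits.append(byte & 0x0F)
    digits.append(raw[-1] >> 4)
    value = int("".join(str(d) for d in digits))
    if raw[-1] & 0x0F == 0x0D:
        value = -value
    # Shift the implied decimal point (the "V" in a PIC clause).
    return Decimal(value).scaleb(-scale)


# Hypothetical record: NAME PIC X(10), BALANCE PIC S9(3)V99 COMP-3.
record = "JANE DOE  ".encode("cp037") + bytes([0x12, 0x34, 0x5C])

# Without the layout these 13 bytes are opaque. With it:
name = record[:10].decode("cp037").rstrip()      # EBCDIC text field
balance = unpack_comp3(record[10:13], scale=2)   # packed decimal field
print(name, balance)
```

The layout, not the data, carries the meaning: the same three trailing bytes could be a balance, a date, or part of a name depending on the copybook.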

This is where the “Legacy Logic” tools provide an essential on-ramp. By processing COBOL data files and gathering automated statistics, these tools create a comprehensive “context map” of your historical data. We are moving from blind storage to instant visibility, transforming raw records into a viable, structured starting point for intelligence. This statistical baseline is the “ground truth” that allows an AI to navigate decades of enterprise memory with precision. It turns what was once “dark data” into a clear, searchable asset before a single prompt is even written.
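As a rough illustration of what “automated statistics” over fixed-width records could look like, here is a small Python sketch. The layout, field names, and sample data are hypothetical, the records are assumed to be already decoded to text, and the statistics chosen (blank counts, distinct values, min and max) are my own guess at a useful baseline rather than a description of the actual tools.

```python
from collections import Counter

# Hypothetical layout: (field name, offset, length, kind).
# In practice this would be derived from a COBOL copybook.
LAYOUT = [
    ("policy_id", 0, 6, "text"),
    ("state", 6, 2, "text"),
    ("premium", 8, 7, "number"),
]
RECORD_LENGTH = 15


def profile(data: str) -> dict:
    """Split fixed-width data into records and gather per-field statistics."""
    records = [data[i:i + RECORD_LENGTH] for i in range(0, len(data), RECORD_LENGTH)]
    stats = {"record_count": len(records), "fields": {}}
    for name, offset, length, kind in LAYOUT:
        values = [r[offset:offset + length].strip() for r in records]
        filled = [v for v in values if v]
        field = {
            "blank": len(values) - len(filled),
            "distinct": len(set(filled)),
            "top": Counter(filled).most_common(1),
        }
        if kind == "number":
            numbers = [int(v) for v in filled if v.isdigit()]
            field["invalid"] = len(filled) - len(numbers)
            if numbers:
                field["min"], field["max"] = min(numbers), max(numbers)
        stats["fields"][name] = field
    return stats


# Three hypothetical 15-character records; the last has a blank premium.
SAMPLE = (
    "P00001NY0012500"
    "P00002CA0009900"
    "P00003NY       "
)
context_map = profile(SAMPLE)
print(context_map)
```

Even this toy profile surfaces the kind of facts an AI needs before it can reason about the file: how many records exist, which fields are sparsely populated, and what ranges the numeric values fall in.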

Conversational IQ: Turning Records into an Intelligence Hub

The true mindset shift occurs when we stop viewing data as a report and start viewing it as a conversation. By loading processed records into NotebookLM, we create a sophisticated AI Intelligence Hub that fundamentally changes how stakeholders interact with the past.

Imagine no longer waiting on a COBOL programmer to write a batch report that takes three days to run. Instead, a CEO or Product Manager can ask a natural language question: “Compare our highest-performing insurance riders from 1985 against current market trends. What logic are we missing?”

By loading legacy records into a conversational notebook environment, the data is no longer a static archive; it is a live participant in strategic decision-making. This workflow turns the “Legacy Garage” into a fountain of insights, allowing the enterprise to “talk” to its history through a 21st-century interface.
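How the processed records actually reach the notebook is not something I will spell out here, but one plausible hand-off is a plain-text summary that a person uploads as a source document. The sketch below assumes that approach; the function name, the dataset name, and the statistics dictionary are all hypothetical, and no NotebookLM API is used or implied.

```python
def render_summary(dataset: str, stats: dict) -> str:
    """Render a statistics dictionary as a Markdown document for use as a notebook source."""
    lines = [f"# Profile of {dataset}", "", f"Records: {stats['record_count']}", ""]
    for name, field in stats["fields"].items():
        lines.append(f"## Field: {name}")
        for key, value in field.items():
            lines.append(f"- {key}: {value}")
        lines.append("")
    return "\n".join(lines)


# Hypothetical statistics for a hypothetical policy file.
example_stats = {
    "record_count": 3,
    "fields": {
        "state": {"blank": 0, "distinct": 2},
        "premium": {"blank": 1, "min": 9900, "max": 12500},
    },
}

summary = render_summary("POLICY.MASTER (hypothetical)", example_stats)
print(summary)
```

A document like this gives the conversational layer something readable to ground its answers in, instead of raw fixed-width bytes.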

The Future of the Mainframe

The transition from COBOL to AI is not about replacement; it is about liberation. Metadata Garage Services proves that the mainframe can remain a foundational asset while its data is freed to fuel modern competitive advantages. By automating the extraction and statistical mapping of legacy files, we bridge the gap between the mid-20th-century engine and the AI-driven future.

The technical hurdles have been cleared. The only remaining question is one of vision: What transformative insights are currently hidden in your own legacy “garage,” just waiting to be uncovered?